
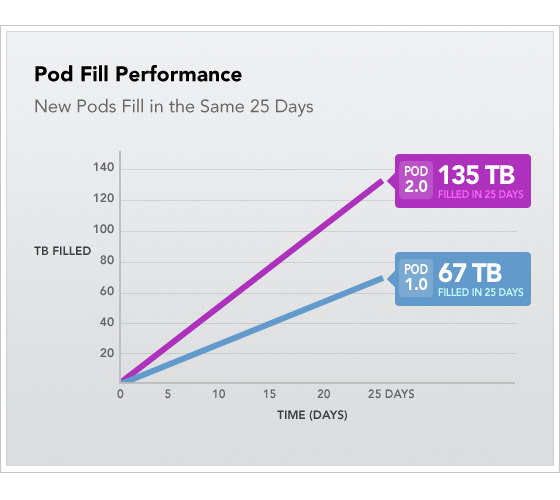

It’s been over a year since Backblaze revealed the designs of our first generation (67TB) Storage Pod. During that time, we’ve remained focused on our mission to provide an unlimited online backup service for $5 per month. To maintain profitability, we continue to avoid overpriced commercial solutions, and we now build the Backblaze Storage Pod 2.0: a 135TB, 4U server for $7,384. It’s double the storage and twice the performance—at a lower cost than the original.

In this post, we’ll share how to make a 2.0 Storage Pod, and you’re welcome to use the design. We’ll also share some of our secrets from the last three years of deploying more than 16PB worth of Backblaze Storage Pods. As before, our hope is that others can benefit from this information and help us refine the Pods. (Some of the enhancements are contributions from helpful kindred Pod builders, so if you do improve your Backblaze Pod farm, please balance the karma and send us your suggestions!)

Quick Review: What Makes a Backblaze Storage Pod?

A Backblaze Storage Pod is a self-contained unit that puts storage online. It’s made up of a custom metal case with commodity hardware inside. You can find a parts list in Appendix A. You can also link to a power wiring diagram, see an exploded diagram of parts, and check out a half-assembled Pod. The two most noteworthy factors are that the cost of the hard drives dominates the price of the overall Pod and that the system is made entirely of commodity parts. For more background, read the original blog post. Now let’s talk about the changes.

Density Matters: Double the Storage in the Same Enclosure

We upgraded the hard drives inside the 4U sheet metal Pod enclosure to store twice as much data in the same space. On top of the up-front cost of filling a rack with Pods, one data center rack containing 10 Pods costs Backblaze about $2,100 per month to operate, divided roughly equally into thirds for physical space rental, bandwidth, and electricity. Doubling the density means that, for the same amount of data, we spend half as much on both physical space and electricity. The picture below is from our data center, showing 15PB racked in a single row of cabinets. The newest cabinets squeeze 1PB into three-quarters of a single cabinet for $56,696.
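As a rough sketch of that arithmetic, the snippet below compares the monthly space-plus-electricity cost per terabyte for a rack of first-generation Pods and a rack of 2.0 Pods. It assumes the $2,100 splits into exactly equal thirds and that a rack's space and electricity bill is the same for either generation; the function and constant names are illustrative only.

```python
# Back-of-the-envelope rack economics using the figures quoted in this post.
RACK_COST_PER_MONTH = 2100.0  # USD per month for one rack of 10 Pods
PODS_PER_RACK = 10

# Assumption: the split is exact thirds. Space and electricity are the two
# thirds that the post says benefit from higher density.
DENSITY_SENSITIVE_SHARE = 2 / 3


def space_and_power_cost_per_tb(tb_per_pod: float) -> float:
    """Monthly space + electricity cost per TB stored in a full rack."""
    rack_tb = PODS_PER_RACK * tb_per_pod
    return RACK_COST_PER_MONTH * DENSITY_SENSITIVE_SHARE / rack_tb


old_cost = space_and_power_cost_per_tb(67)    # first-generation Pod
new_cost = space_and_power_cost_per_tb(135)   # Pod 2.0

print(f"67TB Pods:  ${old_cost:.2f} per TB per month")
print(f"135TB Pods: ${new_cost:.2f} per TB per month")
print(f"ratio: {new_cost / old_cost:.2f}")
```

Bandwidth is left out on purpose, because it scales with the data moved rather than with how tightly the drives are packed.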

Our online backup cloud storage is our largest cost, and we are obsessed with providing a service that remains secure, reliable, and above all, inexpensive. We’ve seen competitors unable to react to these demands who were forced to exit the market, like Iron Mountain, or raise prices, like Mozy and Carbonite. Controlling the hardware design has allowed us to keep prices low.

We are constantly looking at new hard drives and evaluating them for reliability and power consumption. The Hitachi 3TB drive (Hitachi Deskstar 5K3000 HDS5C3030ALA630) is our current favorite for both its low power demand and astounding reliability. The Western Digital and Seagate equivalents we tested saw much higher rates of popping out of RAID arrays and drive failure. Even the Western Digital Enterprise hard drives had the same high failure rates. The Hitachi drives, on the other hand, perform wonderfully.

Twice as Fast

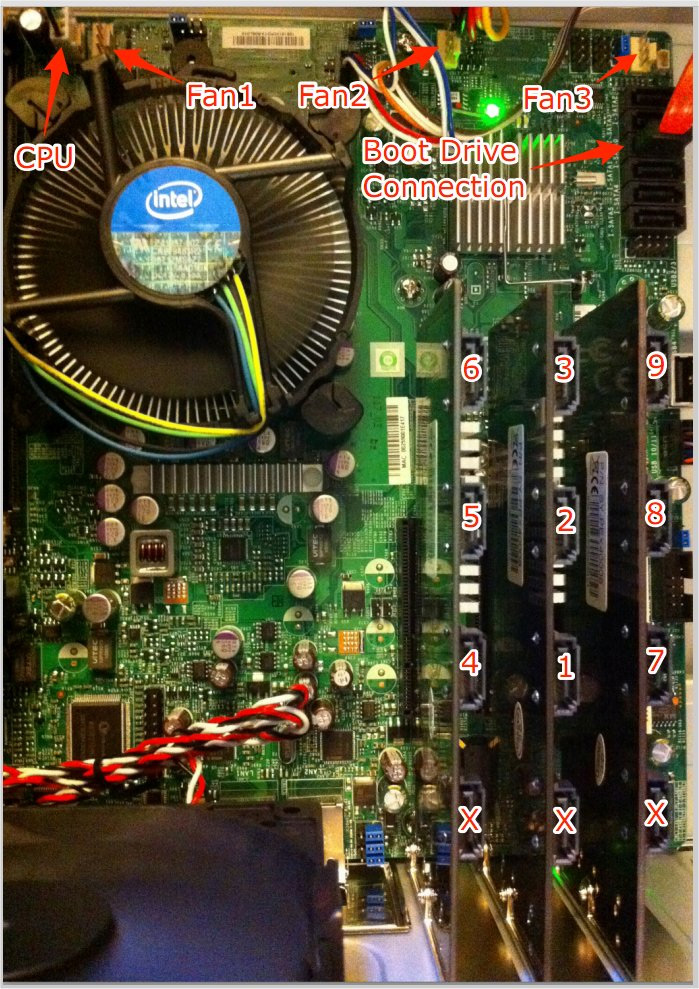

We’ve made several improvements to the design that have doubled the performance of the Storage Pod. Most of the improvements were straightforward and helped by Moore’s Law. We bumped the CPU up from the Intel dual core CPU to the Intel i3 540 and replaced the motherboard, which had one gigabit Ethernet port, with a Supermicro motherboard that has two. RAM dropped in price, so we doubled it to 8GB in the new Pod. More RAM enables our custom Backblaze software layer to create larger disk caches that can really speed up certain types of disk I/O.

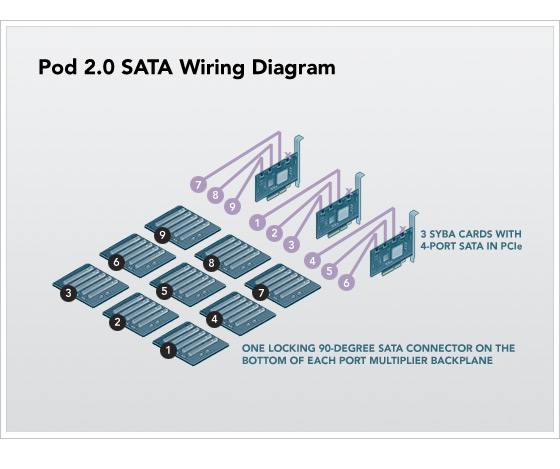

In the first generation Storage Pod, we ran out of the faster PCIe slots and had to use one slower PCI slot, creating a bottleneck. Justin Stottlemyer from Shutterfly found a better PCIe SATA card, which enabled us to reduce the SATA cards from four to three. Our upgraded motherboard has three PCIe slots, completely eliminating the slower PCI bottleneck from the system. The updated SATA wiring diagram is seen below. Hint: The Pod will work if you connect every port multiplier backplane to a random SATA connection, but if you wire it up as shown below, the 45 drives will appear named in sequential order.

We upgraded the Linux 64-bit OS from Debian 4 to Debian 5, but we no longer use JFS as the file system. We selected JFS years ago for its ability to accommodate large volumes and low CPU usage, and it worked well. However, ext4 has since matured in both reliability and performance, and we realized that with a little additional effort we could get all the benefits and live within the unfortunate 16TB volume limitation of ext4. One of the required changes to work around ext4’s constraints was to add LVM (Logical Volume Manager) above the RAID 6 but below the file system. In our particular application (which features more writes than reads), ext4’s performance was a clear winner over ext3, JFS, and XFS.
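To illustrate why LVM is needed between the RAID 6 layer and ext4, here is a minimal sizing sketch. It assumes the 45 drives are grouped into three 15-drive RAID 6 arrays and that the ext4 limit is 16 TiB; neither the grouping nor the resulting volume sizes are stated in this post, so treat them as assumptions rather than the actual Backblaze layout.

```python
import math

EXT4_MAX_TIB = 16          # ext4 volume limit cited above, taken here as 16 TiB
DRIVE_TB = 3               # decimal terabytes per Hitachi drive
DRIVES_PER_ARRAY = 15      # assumption: 45 drives split into three arrays
RAID6_PARITY_DRIVES = 2    # RAID 6 gives up two drives' worth of capacity


def plan_volumes(drives: int = DRIVES_PER_ARRAY, drive_tb: float = DRIVE_TB):
    """Return (usable TB, usable TiB, volume count, TiB per volume)."""
    usable_tb = (drives - RAID6_PARITY_DRIVES) * drive_tb
    usable_tib = usable_tb * 1e12 / 2**40
    # Smallest number of equal logical volumes that each fit under the limit.
    count = math.ceil(usable_tib / EXT4_MAX_TIB)
    return usable_tb, usable_tib, count, usable_tib / count


usable_tb, usable_tib, count, per_volume = plan_volumes()
print(f"usable per array: {usable_tb} TB ({usable_tib:.2f} TiB)")
print(f"logical volumes needed: {count} of about {per_volume:.2f} TiB each")
```

Under these assumptions a single array is more than twice the ext4 limit, so it has to be carved into several logical volumes, each carrying its own file system.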

With these performance improvements, we see the new Storage Pods in our data center accepting customer data more than twice as fast as the older generation Pods. It takes approximately 25 days to fill a new Pod with 135TB of data. The chart below shows the measured fill rates of an old Pod versus a new Pod, both under real-world maximum load in our data center.

Please note: The above graph is not the benchmarked write performance of a Pod; we have easily saturated the gigabit pipes copying data from one Pod to another internally. This graph shows Pods running in production, accepting data from thousands of simultaneous and independent desktop machines running Windows and Mac OS, where each desktop is forming HTTPS connections to the Tomcat web server and pushing data to the Pod. At the same time, customers are preparing restores that read data off those drives, system cleanup processes are running, RAID repairs occasionally kick in, and so on. In this end-to-end measurement, the new Pods are twice as fast in our environment.
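For scale, the 25-day fill time quoted above can be converted into an average sustained ingest rate. This is a simple sketch that assumes decimal units (1TB = 10^12 bytes) and a perfectly steady fill; the names are illustrative.

```python
POD_CAPACITY_BYTES = 135e12      # 135TB, decimal units assumed
FILL_DAYS = 25                   # approximate fill time from the post
SECONDS_PER_DAY = 86_400
NETWORK_PORTS = 2                # gigabit Ethernet ports on the new motherboard
PORT_BITS_PER_SECOND = 1e9


def average_ingest_rate(capacity_bytes: float, days: float) -> float:
    """Average bytes per second needed to fill the capacity in the given days."""
    return capacity_bytes / (days * SECONDS_PER_DAY)


rate_bytes = average_ingest_rate(POD_CAPACITY_BYTES, FILL_DAYS)
rate_bits = rate_bytes * 8
link_fraction = rate_bits / (NETWORK_PORTS * PORT_BITS_PER_SECOND)

print(f"{rate_bytes / 1e6:.1f} MB/s, {rate_bits / 1e6:.0f} Mbit/s")
print(f"{link_fraction:.0%} of the combined gigabit link capacity")
```

The result sits well below what the two network ports can carry, which fits the point that this is a production, end-to-end figure rather than a raw write benchmark.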

Lessons Learned: Three Years, 16PB and Counting

Backblaze is employee owned (with no VC funding or other deep pockets), so we have two choices: 1) stay profitable by keeping costs low or 2) go out of business. Staying profitable is not just about upfront hardware costs; there are ongoing expenses to consider.

One of the hidden costs to a data center is the headcount (salary) for the employees who deploy Pods, maintain them, replace bad drives with good, and generally manage the facility. Backblaze has 16PB and growing, and we employ one guy (Sean) whose full time job is to maintain our fleet of 201 Pods, which hold 9,045 drives. Typically, once every two weeks, Sean deploys six Pods during an eight-hour work day. (He gets a little help from one of us to lift each Pod into place because they each weigh 143 pounds.)

Our philosophy is to plan for equipment failure and build a system that operates in spite of it. We have a lot of redundancy, ensuring that if a drive fails, immediate replacement isn’t critical. So at his leisure, Sean also spends one day each week replacing drives that have gone bad. As of this week, Backblaze has more than 9,000 hard drives spinning in the data center, the oldest of which we purchased four years ago. We see fairly high infant mortality on the hard drives deployed in brand new Pods, so we like to burn the Pods in for a few days before storing any customer data. We have yet to see any drives die because of old age, which will be fascinating to monitor in the next few years. All told, Sean replaces approximately 10 drives per week, indicating a 5% per year drive failure rate across the entire fleet, which includes infant mortality and also the higher failure rates of previous drives. (We are currently seeing failures in less than 1% of the Hitachi Deskstar 5K3000 HDS5C3030ALA630 drives that we’re installing in Pod 2.0.)
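The fleet-wide failure rate follows directly from the replacement count. A quick check, assuming "approximately 10 drives per week" holds for all 52 weeks and using the 201 Pods of 45 drives each mentioned above:

```python
PODS = 201
DRIVES_PER_POD = 45
DRIVES_IN_FLEET = PODS * DRIVES_PER_POD   # 9,045 drives
REPLACEMENTS_PER_WEEK = 10                # approximate figure from the post
WEEKS_PER_YEAR = 52


def annual_failure_rate(replaced_per_week: float, fleet_size: int,
                        weeks: int = WEEKS_PER_YEAR) -> float:
    """Fraction of the fleet replaced over one year at a steady weekly rate."""
    return replaced_per_week * weeks / fleet_size


rate = annual_failure_rate(REPLACEMENTS_PER_WEEK, DRIVES_IN_FLEET)
print(f"{DRIVES_IN_FLEET} drives, annual failure rate of about {rate:.1%}")
```

That works out to a little under 6% per year, in line with the rounded 5% figure given that the weekly count is itself approximate.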

We monitor the temperature of every drive in our data center through the standard SMART interface, and we’ve observed in the past three years that: 1) hard drives in Pods at the top of racks run three degrees warmer on average than those in Pods on the lower shelves; 2) drives in the center of the Pod run five degrees warmer than those on the perimeter; 3) Pods do not need all six fans, since the drives maintain the recommended operating temperature with as few as two; and 4) heat doesn’t correlate with drive failure (at least in the ranges seen in Storage Pods).

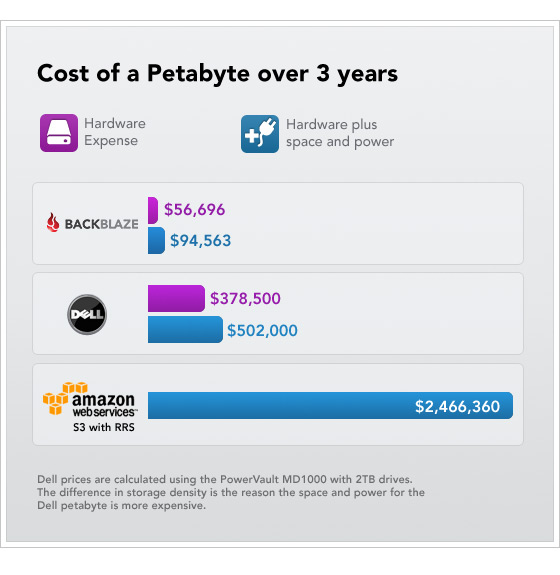

One important note: Because all of the parts (including drives) in the Backblaze Storage Pod come with a three-year warranty, we rarely pay for a replacement part. The drive manufacturers take back failed drives with “no questions asked” and send free replacements. If you figure that storage resellers, such as NetApp and EMC, tack on a three-year support fee, a petabyte of Backblaze storage costs less than their support contract alone. The chart below takes all of our experience into account and shows what it costs to own and maintain a petabyte of storage for three years:

In the chart above, the economies of scale only kick in if you really do need to store a full petabyte or more. For a small amount of data (a few terabytes), Amazon S3 could easily save money, but the Amazon option is clearly a dubious financial choice for a company with large, multi-petabyte storage needs.

Final Thoughts

The Backblaze Storage Pod is just one building block in making a cloud storage service. If all you need is cheap storage, this may suffice. If you need to build a reliable, redundant, monitored storage system, you’ve got more work ahead of you. At Backblaze, we manage and monitor the cloud service with proprietary software that we’ve developed over the years.

We offer our Storage Pod design free of any licensing or any future claims of ownership. Anybody is allowed to use and improve upon it. You may build your own cloud system and use the Backblaze Storage Pod as part of your solution. The steps to assemble a Storage Pod, including diagrams, can be found on our original blog post, and an updated list of parts is provided below in Appendix A. We don’t sell the design, so we don’t provide support or a warranty for people who build their own. To all of those builders who take up the challenge, we’d love to hear from you and welcome any insights you provide about the experience. And please send us a photo of your new 135TB Pod.

Appendix A: Price List

Hitachi 3TB 5400 RPM HDS5C3030ALA630

Zippy PSM-5760 Power Supply

CFI Group CFI-B53PM 5 Port Backplane (SiI3726), available in quantities of 9 for $47

Syba PCI Express SATA II 4-Port RAID Controller Card SY-PEX40008

SuperMicro MBD-X8SIL-F-B

Mechatronics G1238M(OR E)12B1-FSR 12V 3-Wire Fan

Crucial CT25672BA1339 2GB, DDR3 PC3-10600 (4x 2GB = 8GB total)

Western Digital Caviar Blue WD1600AAJS 160GB 7200 RPM

Newegg GC36AKM12 3 Foot SATA Cable

Fastener SuperStore 1/4" Round Nylon Standoffs Female/Female 4-40 x 3/4"

Aero Rubber Co. 3.0 x .500 inch EPDM (0.03" Wall)

Vantec VDK-PSU Power Supply Vibration Dampener

Acoustic Ultra Soft Anti-Vibration Fan Mount AFM02

Acoustic Ultra Soft Anti-Vibration Fan Mount AFM03

Small Parts MPN-0440-06P-C Nylon Pan Head Phillips 4-40 x 3/8"

House of Foam 16" x 17" x 1/8" Foam Rubber Pad

Custom wiring harnesses for PSU1 and PSU2 (the Zippy power supplies):

See detailed wiring harness diagrams.

SATA Chipsets

SiI3726 on each port multiplier backplane to attach five drives to one SATA port.

SiI3124 on three PCIe SATA cards. Each PCIe card has four SATA ports on it, although we only use three of the four ports.
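The chipset arrangement above accounts for all 45 drives: three cards, three ports used per card, and five drives behind each port multiplier. The sketch below enumerates that topology. The zero-based card, port, and slot numbering and the drive index are hypothetical labels for illustration, not the device names Linux assigns.

```python
CARDS = 3                  # PCIe SATA cards (SiI3124)
PORTS_USED_PER_CARD = 3    # each card has four ports; only three are used
DRIVES_PER_BACKPLANE = 5   # one SiI3726 port multiplier per backplane


def drive_map():
    """List (drive index, card, port, backplane slot) in wiring order."""
    mapping = []
    for card in range(CARDS):
        for port in range(PORTS_USED_PER_CARD):
            for slot in range(DRIVES_PER_BACKPLANE):
                mapping.append((len(mapping), card, port, slot))
    return mapping


drives = drive_map()
print(f"{len(drives)} drives on {CARDS * PORTS_USED_PER_CARD} backplanes")
for index, card, port, slot in drives[:6]:
    print(f"drive {index:2d}: card {card}, port {port}, slot {slot}")
```

Walking the cards and ports in a fixed order like this is the idea behind wiring the backplanes consistently so the drives enumerate sequentially.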